Project Overview

Uninote is a meeting intelligence product focused on recording online meetings, capturing transcripts, and turning conversations into reusable team knowledge. The product spans a browser-based web app, backend APIs, calendar integrations, storage pipelines, and an automated recording bot that can join meetings and process the resulting media.

I worked on the project as a Full-stack Developer from June 2025 to February 2026, contributing across the React frontend, FastAPI backend, and supporting services around recording, transcription, and meeting workflows.

Link to the product: Uninote

Tech Stack

- Frontend: React 19, TypeScript, Vite, Material UI, Jotai

- Backend: Python, FastAPI, SQLAlchemy, PostgreSQL

- Realtime / Media: WebSocket transcription, browser-based meeting recording, transcription services

- Automation: TypeScript, Playwright, meeting recording bot

- Platform & Infra: AWS S3, AWS services for storage and orchestration, Docker, Terraform

- Integrations: Google / Microsoft calendar flows, Stripe, browser extension support

Product Context

Meeting products look simple on the surface, but most of the engineering work sits at the edges:

- joining the right meeting at the right time

- handling unreliable browser and media conditions

- storing large recording artifacts safely

- streaming or processing transcripts with acceptable latency

- presenting all of that back to users in a clear product experience

This makes Uninote a strong full-stack project: the frontend experience is only as good as the backend workflows, external integrations, and background automation behind it.

What I Worked On

My contributions spanned the stack:

- building and improving frontend screens for meeting, transcript, and collaboration workflows

- supporting backend endpoints and domain logic for recordings, meetings, sharing, and integrations

- working on calendar-connected flows and scheduled recording behavior

- contributing to systems that connect recording artifacts, transcript data, and product UI

- helping maintain product flows that span multiple services instead of a single monolith

Key Engineering Decisions

1. Split the product by responsibility

The architecture is intentionally separated into a user-facing web app, a backend API, and a recording bot. That separation makes sense because meeting capture has very different runtime needs from normal CRUD features. The app can focus on management and presentation, while the recording service handles browser automation and media capture.

2. Use WebSocket-style realtime channels where polling falls short

For live transcription and recording status, plain request/response forces the client to poll, which adds latency and wasted requests. Realtime channels are a better fit when users expect a continuous stream of updates rather than occasional refreshes, so the UI can show recording and processing progress as it happens.
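The fan-out side of such a channel can be sketched roughly as follows. This is a minimal in-memory illustration, not Uninote's actual code: `StatusHub`, the meeting ids, and the event shape are all hypothetical, and in a real FastAPI service each subscriber queue would presumably be drained into a WebSocket connection.

```python
import asyncio
from collections import defaultdict


class StatusHub:
    """In-memory fan-out of status events per meeting (hypothetical sketch)."""

    def __init__(self) -> None:
        # meeting id -> queues of the clients currently watching that meeting
        self._subscribers: dict[str, set[asyncio.Queue]] = defaultdict(set)

    def subscribe(self, meeting_id: str) -> asyncio.Queue:
        queue: asyncio.Queue = asyncio.Queue()
        self._subscribers[meeting_id].add(queue)
        return queue

    def unsubscribe(self, meeting_id: str, queue: asyncio.Queue) -> None:
        self._subscribers[meeting_id].discard(queue)

    def publish(self, meeting_id: str, event: dict) -> None:
        # Push the event to every subscriber of this meeting without blocking.
        for queue in self._subscribers[meeting_id]:
            queue.put_nowait(event)


async def demo() -> list[dict]:
    hub = StatusHub()
    inbox = hub.subscribe("meeting-1")
    hub.publish("meeting-1", {"status": "recording"})
    hub.publish("meeting-2", {"status": "recording"})  # other meeting, not delivered
    hub.publish("meeting-1", {"status": "processing"})
    received = [await inbox.get(), await inbox.get()]
    hub.unsubscribe("meeting-1", inbox)
    return received


if __name__ == "__main__":
    print(asyncio.run(demo()))
```

The client never asks "what is the status now?"; it only reacts to events as they arrive, which is the property that polling cannot provide cheaply.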

3. Keep large media handling outside normal app requests

Recording files are too large and too failure-prone to treat like standard form submissions. Dedicated upload flows backed by cloud storage are the practical choice: they keep large payloads off the API layer and make retries and downstream processing stages easier to manage.
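One way to make uploads retryable is to split a recording into independently uploadable parts. The sketch below only plans the parts and performs no network calls. `plan_parts` and `Part` are hypothetical names, the 8 MiB default is an arbitrary assumption, and the two constants reflect S3's documented multipart limits (5 MiB minimum part size except for the last part, at most 10,000 parts).

```python
from dataclasses import dataclass

MIN_PART_SIZE = 5 * 1024 * 1024  # S3 minimum for every part except the last
MAX_PARTS = 10_000               # S3 maximum number of parts per upload


@dataclass(frozen=True)
class Part:
    number: int  # 1-based part number
    offset: int  # byte offset into the file
    size: int    # number of bytes in this part


def plan_parts(file_size: int, part_size: int = 8 * 1024 * 1024) -> list[Part]:
    """Split a file of file_size bytes into upload parts (hypothetical helper)."""
    if file_size <= 0:
        raise ValueError("file_size must be positive")
    part_size = max(part_size, MIN_PART_SIZE)
    # Grow the part size if the file would otherwise exceed the part limit.
    min_size_for_limit = -(-file_size // MAX_PARTS)  # ceiling division
    part_size = max(part_size, min_size_for_limit)

    parts: list[Part] = []
    offset = 0
    number = 1
    while offset < file_size:
        size = min(part_size, file_size - offset)
        parts.append(Part(number, offset, size))
        offset += size
        number += 1
    return parts
```

Because each part has a fixed offset and size, a failed part can be retried on its own instead of restarting the whole recording upload.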

4. Separate platform-agnostic logic from platform-specific meeting handling

Recording across Google Meet, Teams, and Zoom creates a portability problem. A useful pattern is to keep shared orchestration logic centralized while isolating platform-specific selectors, behaviors, and exit conditions. That reduces the blast radius when one meeting platform changes its UI or join flow.
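A rough sketch of that split, written in Python for illustration: shared orchestration lives in `plan_session`, while everything platform-specific sits in a small adapter record. All names here are hypothetical, and the selectors and exit texts are placeholders, not the real values for any platform.

```python
from dataclasses import dataclass
from urllib.parse import urlparse


@dataclass(frozen=True)
class PlatformAdapter:
    name: str
    host_suffixes: tuple[str, ...]
    join_button_selector: str  # placeholder, not a real selector
    meeting_ended_text: str    # placeholder exit condition


# Platform-specific details are isolated here; values are illustrative only.
ADAPTERS = (
    PlatformAdapter("google_meet", ("meet.google.com",), "#join-placeholder", "left the meeting"),
    PlatformAdapter("teams", ("teams.microsoft.com",), "#join-placeholder", "call ended"),
    PlatformAdapter("zoom", ("zoom.us",), "#join-placeholder", "meeting has ended"),
)


def resolve_adapter(meeting_url: str) -> PlatformAdapter:
    """Pick the adapter whose host suffix matches the meeting URL."""
    host = (urlparse(meeting_url).hostname or "").lower()
    for adapter in ADAPTERS:
        if any(host == s or host.endswith("." + s) for s in adapter.host_suffixes):
            return adapter
    raise ValueError(f"unsupported meeting host: {host!r}")


def plan_session(meeting_url: str) -> list[str]:
    """Shared orchestration: the same steps for every platform."""
    adapter = resolve_adapter(meeting_url)
    return [
        f"open {meeting_url}",
        f"click {adapter.join_button_selector}",
        "start capture",
        f"stop when page shows {adapter.meeting_ended_text!r}",
    ]
```

When one platform changes its join flow, only its adapter entry needs to change; `plan_session` and the other adapters stay untouched.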

Full-Stack Challenges

- Cross-platform meeting capture: browser automation is inherently fragile when external UIs keep changing

- Realtime state sync: the UI has to reflect recording and transcription state without confusing users

- Calendar-driven workflows: scheduling and auto-join behavior depend on reliable integrations

- Media lifecycle management: recordings, transcripts, summaries, and permissions all need to line up

- Access and collaboration: meeting sharing, invitations, and organization features add complexity beyond raw recording

Outcome

Uninote is the kind of project that demonstrates more than UI work or API work in isolation. It shows product engineering across user interfaces, backend services, integrations, automation, and media pipelines. That is exactly the kind of full-stack complexity I want my portfolio to reflect.

Lessons Learned

- Recording and transcription products are mostly about reliability at the integration boundaries

- Good product UX depends on accurate status modeling for background and realtime processes

- Automation services should be isolated so failures do not leak unnecessary complexity into the main app

- Full-stack work becomes more valuable when you can reason across frontend flows, backend contracts, and background workers together
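The point about accurate status modeling can be illustrated with an explicit state machine. This is a minimal sketch under assumed names: the states and allowed transitions below are hypothetical and do not describe Uninote's actual status model.

```python
from enum import Enum


class RecordingStatus(str, Enum):
    SCHEDULED = "scheduled"
    JOINING = "joining"
    RECORDING = "recording"
    UPLOADING = "uploading"
    TRANSCRIBING = "transcribing"
    READY = "ready"
    FAILED = "failed"


S = RecordingStatus

# Every legal move is listed explicitly; anything else is rejected.
ALLOWED: dict[RecordingStatus, set[RecordingStatus]] = {
    S.SCHEDULED: {S.JOINING, S.FAILED},
    S.JOINING: {S.RECORDING, S.FAILED},
    S.RECORDING: {S.UPLOADING, S.FAILED},
    S.UPLOADING: {S.TRANSCRIBING, S.FAILED},
    S.TRANSCRIBING: {S.READY, S.FAILED},
    S.READY: set(),   # terminal
    S.FAILED: set(),  # terminal
}


def transition(current: RecordingStatus, target: RecordingStatus) -> RecordingStatus:
    """Return the new status, or raise if the move is not allowed."""
    if target not in ALLOWED[current]:
        raise ValueError(f"illegal transition: {current.value} -> {target.value}")
    return target


def is_terminal(status: RecordingStatus) -> bool:
    return not ALLOWED[status]
```

With the transitions written down in one place, the UI, the API, and background workers can agree on what each status means, and an out-of-order event from a worker fails loudly instead of silently showing users a misleading state.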

Attachments

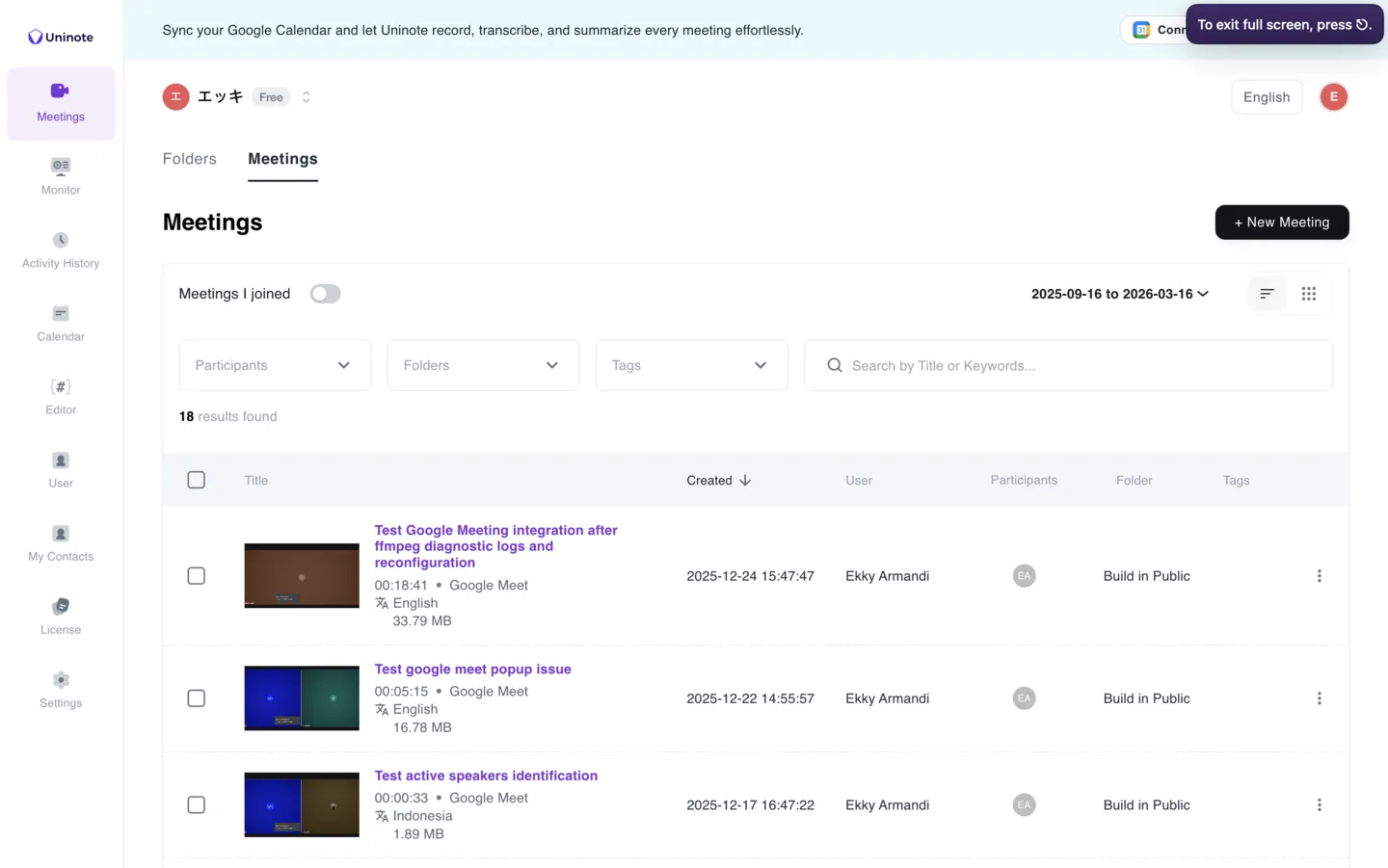

Meeting List / Workspace

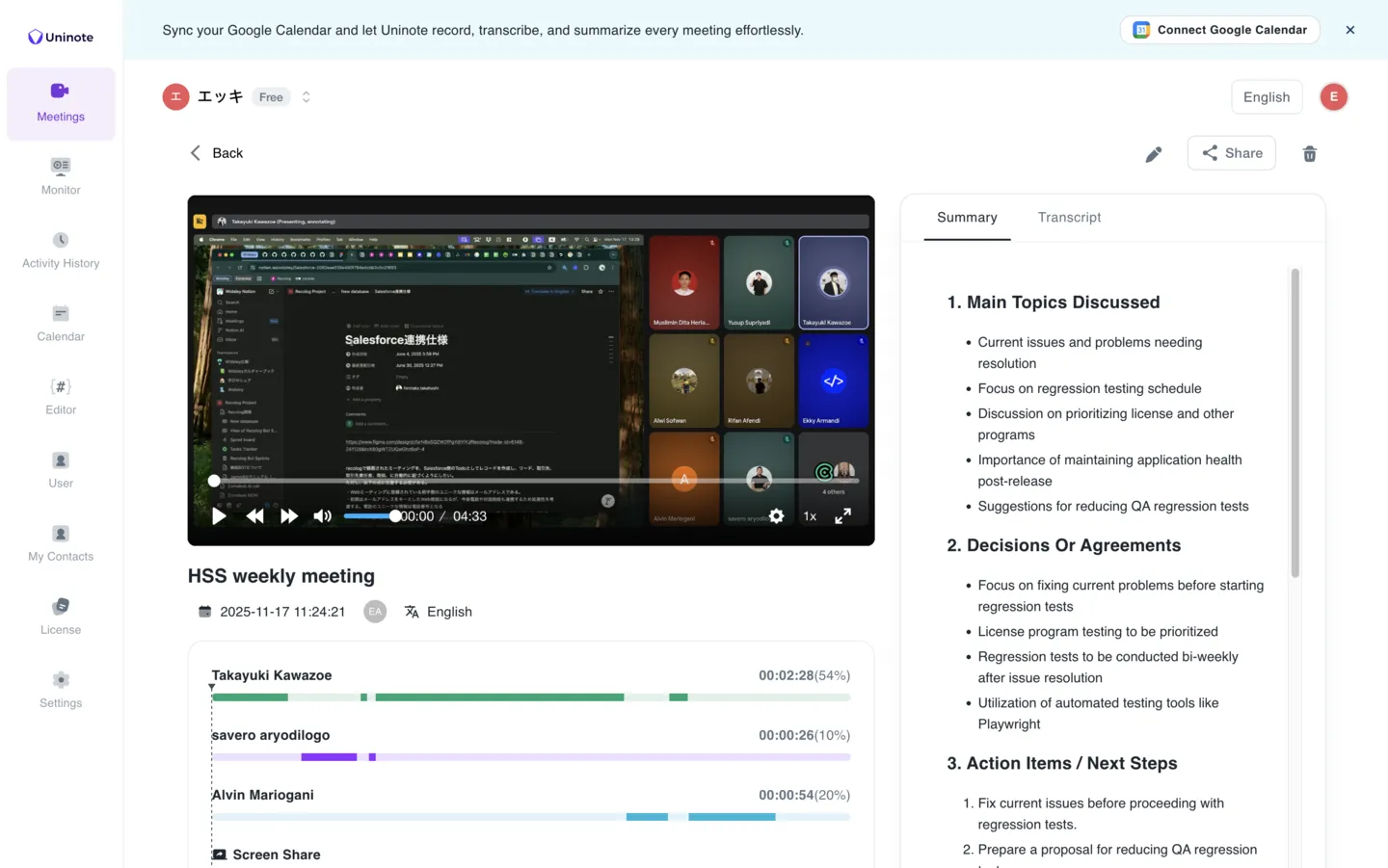

Meeting Detail / AI Summary

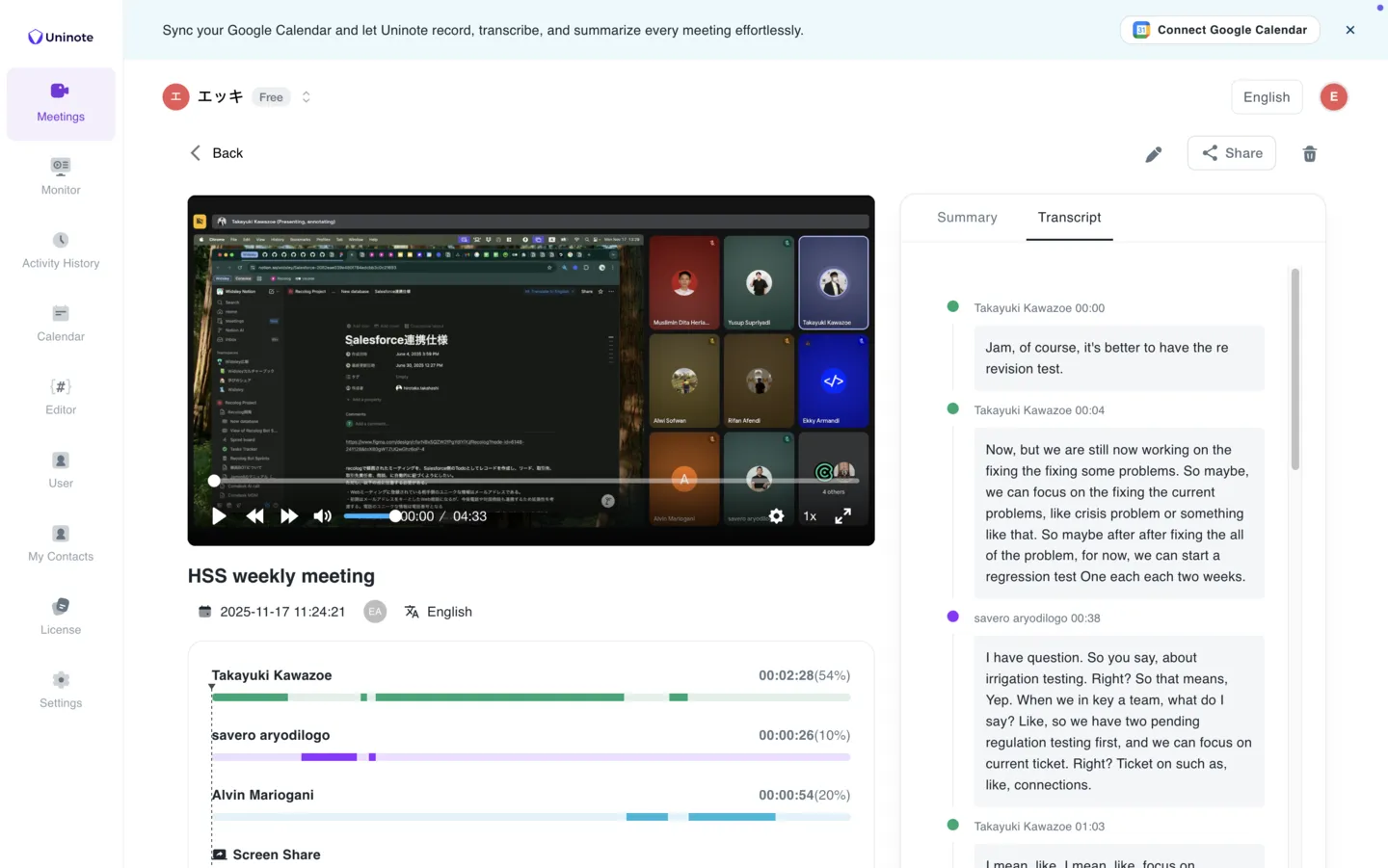

Meeting Detail / Transcript View